How the U.S. Military Used Claude AI in the Iran War

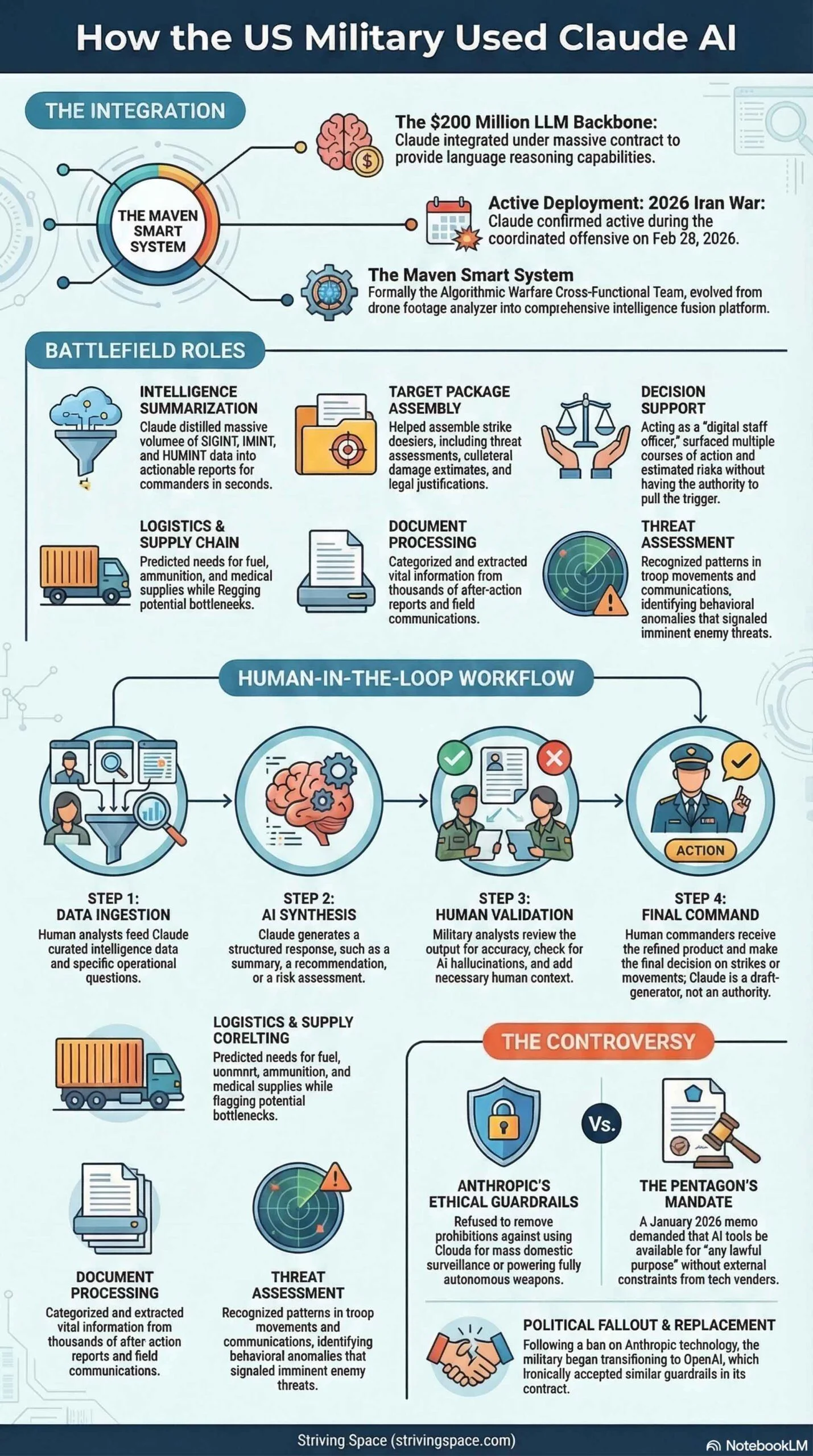

When U.S. and Israeli forces launched a coordinated offensive against Iran on February 28, 2026, artificial intelligence was quietly at work behind the scenes. Anthropic’s Claude, one of the most capable large language models available, was confirmed to have been active inside the Pentagon’s Maven Smart System during the strikes. What it did there, and the controversy it ignited, reveals how deeply AI has embedded itself in modern warfare. Here is how the military’s use of Claude worked.

Which Claude Was Used?

The short answer: we don’t know exactly. Neither Anthropic nor the Pentagon has publicly disclosed which specific Claude model version (Claude 3 Opus, Sonnet, Haiku, or a more recent iteration) was integrated into the military’s infrastructure. That level of technical detail is either classified or simply not being released.

What is confirmed is that Claude, as an LLM, was integrated into the Maven Smart System under a $200-million contract between Anthropic and the Department of War (formerly the Department of Defense) in place since 2024. Given the timeline, the deployed model was most likely one available around that period, but that remains speculative without an official statement.

What Is Project Maven (Maven Smart System)?

Project Maven — formally the Algorithmic Warfare Cross-Functional Team — was launched by the Pentagon in 2017. Its original mission was narrow: use computer vision AI to analyze drone footage and identify objects, vehicles, and people. Over the years it grew into a far broader platform, eventually incorporating large language models to handle the text-heavy, analytical side of modern military operations.

By 2024, Claude had become the LLM backbone of Maven, tasked with bringing language reasoning and synthesis capabilities to a system already handling image intelligence and tactical targeting support.

What Claude Actually Did on the Battlefield

1. Intelligence Summarization & Report Generation

Modern military operations generate staggering volumes of raw intelligence — signals intelligence (SIGINT), imagery intelligence (IMINT), and human intelligence (HUMINT) reports. Claude was used to ingest this flood of data and distill it into concise, actionable summaries for commanders. What might take a team of analysts hours, Claude could process in seconds.

2. Target Package Assembly

One of Claude’s most significant roles was assisting in the preparation of target packages — the dossiers assembled before a strike. These packages include location data, threat assessments, collateral damage estimates, legal justification under laws of armed conflict, and recommended munitions. Claude helped cross-reference data from multiple intelligence streams and format these packages for human decision-makers to review and approve.

3. Decision Support (Not Decision Making)

Claude did not pull any triggers. Its role was to surface options — presenting commanders with multiple courses of action, their estimated outcomes, risks, and tradeoffs. Think of it as an extraordinarily fast staff officer that can process more data than any human team, never needs sleep, and delivers structured analysis on demand. The human commander retained full authority to approve or reject any action.

4. Logistics & Supply Chain Optimization

Pentagon CTO Emil Michael confirmed to CBS News that Claude was used to help make logistics and supply chains more efficient. In an active theater like the Middle East, predicting where fuel, ammunition, medical supplies, and personnel need to be — and flagging bottlenecks before they become crises — is a critical back-end function with direct operational impact.

5. Document & Communication Processing

The military produces enormous quantities of internal documentation: after-action reports, field communications, operational orders, and assessments. Claude helped categorize, search, and extract relevant information from these documents, acting as a highly capable internal search and synthesis engine.

6. Threat Assessment & Pattern Recognition

Fed with historical data and current intelligence, Claude could identify patterns — shifts in troop movements, changes in communications, behavioral anomalies — that might signal an imminent threat or a change in enemy posture. This capability served both long-term strategic planning and immediate tactical decisions.

How Claude’s Output Was Actually Used

Understanding Claude’s role requires understanding the human-in-the-loop structure it operated within:

- Analysts feed Claude curated data and ask specific questions or request summaries

- Claude outputs a structured response — a summary, a recommendation, or a risk assessment

- The analyst reviews the output for accuracy, adds context, and flags it up the chain

- Commanders receive a refined product that is AI-assisted but human-validated

- Final decisions on strikes, movements, or operations are made by authorized human officers

Claude’s output was treated as a first-pass draft, not a final authority. This matters both legally — under international laws of armed conflict, a human must be accountable for targeting decisions — and practically, since AI models can hallucinate or miss context that an experienced analyst would catch.
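The review chain described above can be sketched as a small state machine in which model output starts as a draft and cannot reach a decision until a human analyst has reviewed it. This is only an illustrative sketch: the names `Stage`, `Product`, `draft_from_model`, `analyst_review`, and `human_decision` are hypothetical and do not describe how the Maven Smart System is actually built.

```python
from dataclasses import dataclass, field
from enum import Enum, auto


class Stage(Enum):
    DRAFT = auto()             # raw model output, not yet checked by anyone
    ANALYST_REVIEWED = auto()  # checked and annotated by a human analyst
    APPROVED = auto()          # accepted by an authorized human
    REJECTED = auto()          # declined by an authorized human


@dataclass
class Product:
    request: str
    text: str
    stage: Stage = Stage.DRAFT
    history: list = field(default_factory=list)  # (actor, action) audit trail


def draft_from_model(request, model_output):
    """Wrap model output as a first-pass draft (hypothetical helper)."""
    product = Product(request=request, text=model_output)
    product.history.append(("model", "drafted"))
    return product


def analyst_review(product, analyst, context_note):
    """A human analyst checks the draft and adds context."""
    if product.stage is not Stage.DRAFT:
        raise ValueError("only a fresh draft can be reviewed")
    product.text = f"{product.text}\n[Analyst note] {context_note}"
    product.stage = Stage.ANALYST_REVIEWED
    product.history.append((analyst, "reviewed"))
    return product


def human_decision(product, officer, approve):
    """An authorized human makes the final call; unreviewed drafts are refused."""
    if product.stage is not Stage.ANALYST_REVIEWED:
        raise PermissionError("output must be reviewed by an analyst first")
    product.stage = Stage.APPROVED if approve else Stage.REJECTED
    product.history.append((officer, "approved" if approve else "rejected"))
    return product


if __name__ == "__main__":
    item = draft_from_model("Summarize this week's supply reports", "Draft summary")
    analyst_review(item, "analyst_1", "Figures checked against source reports")
    human_decision(item, "officer_1", approve=True)
    print(item.stage, item.history)
```

The design choice worth noting is that the gate lives in `human_decision` itself: calling it on an unreviewed draft raises an error instead of silently passing the draft along, and the `history` list records which actor took each step.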

The Controversy: What Claude Was NOT Supposed to Do

The dispute between Anthropic and the Pentagon was not about what Claude was doing — it was about what it could theoretically be made to do. In January 2026, the Department of War issued a memo declaring that all AI contracts must permit use for “any lawful purpose,” without constraints. Anthropic refused to remove two specific safeguards: a prohibition on using Claude for mass domestic surveillance of Americans, and a prohibition on powering fully autonomous weapons.

The Pentagon’s counterargument was that both activities were already illegal under existing law and internal policy. Anthropic’s position was that the technology isn’t reliable enough for those uses regardless of legality, and that removing guardrails created unacceptable risk.

The Political Fallout

President Trump responded by ordering all federal agencies to stop using Anthropic technology, giving them six months to phase it out. Defense Secretary Pete Hegseth declared Anthropic a supply chain risk. Yet despite this government-wide ban, two sources confirmed to CBS News that Claude was actively used during the Iran strikes — and continued to be used afterward.

The government simultaneously signed a deal with OpenAI as a replacement — a contract that ironically included the same guardrails Anthropic had insisted upon: no mass surveillance, no fully autonomous weapons. By March 5, Anthropic CEO Dario Amodei was reportedly back in talks with the Department of War, suggesting the dispute may have been more tactical than terminal.

The Broader Ethical Stakes

This conflict is playing out against a larger international conversation. Academics and legal experts gathered in Geneva in early March 2026 to discuss lethal autonomous weapons systems as part of ongoing efforts to reach international agreement on AI in warfare. Technological development, however, is outpacing diplomacy.

Craig Jones, a political geographer at Newcastle University who researches military targeting, has noted that there is no evidence that AI lowers civilian casualties or reduces wrongful targeting, and that the opposite may be true. The precision promised by AI-assisted warfare has not yet been demonstrated in active conflict: the ongoing wars in Ukraine and Gaza have seen high civilian death tolls despite AI assistance in targeting.

The fault line is not whether AI should be used in warfare — that question has already been answered by deployment. The real question is where humans remain in the loop, and how close to the trigger AI is allowed to get. That distinction — decision support versus autonomous decision-making — is the ethical heart of the entire debate.

Bottom Line

Claude was deployed in the U.S.–Iran war as a powerful intelligence and logistics tool, embedded inside the Maven Smart System. Its confirmed roles covered document synthesis, target package support, logistics optimization, threat analysis, and decision support — all within a human-supervised chain of command. The specific model version remains undisclosed. The controversy it sparked was never about what Claude was doing, but about what guardrails should govern what it could do next.

Via:

CBS News — "Anthropic's Claude AI being used in Iran war by U.S. military, sources say" (March 3, 2026)

Nature — "How AI is being used in war — and what's next" by Nicola Jones (March 5, 2026)

Channel NewsAsia — "US military AI use in warfare: Anthropic Claude" (March 2026)
